Booking travel with ChatGPT? Read this first

ChatGPT, Gemini and other AI tools are becoming increasingly popular for travel planning. It’s understandable. They’re fast, mostly free, and the answers sound convincing. But there’s a serious problem: AI verifies nothing. It generates plausible-sounding information, recommends websites without checking whether they’re legitimate, and takes zero responsibility when things go wrong.

The damage is already measurable. According to McAfee research from 2025, global losses from AI-driven travel scams have reached an estimated $13 billion. The average victim loses close to $1,000 per incident. And it’s getting worse, not better.

We touched on this issue in a recent article on DutchTravelBloggers, specifically in chapter 3, which covers the trust problem with AI-generated travel content. It’s time for a more detailed look at the real dangers – with concrete examples.

AI promotes scam websites without knowing it

The most dangerous thing about AI isn’t that it lies – it’s that it doesn’t know it’s lying. Tools like ChatGPT and Google Gemini generate answers based on patterns in text. The system has no concept of what makes a website trustworthy or fraudulent. Scammers know this.

Using simple website builders, they can launch a professional-looking booking site within minutes, complete with logos copied from real brands, AI-generated photos, fake reviews, a convincing domain name, and a fraudulent phone number for “customer support.” An AI tool can then recommend that site without any verification whatsoever.

The traveler clicks through, books a flight or hotel room for hundreds or thousands of euros, and the money disappears. There’s no customer service to call back, no desk to file a complaint, and in most cases, no refund.

The hotel that looks nothing like the photos

A variation that’s becoming increasingly common: the hotel exists, but looks nothing like the AI recommendation suggested. Scammers use AI image editing tools to doctor photos or fabricate them entirely. Construction sites disappear, ocean views are added, mouldy rooms are transformed into luxury suites.

Travelers arrive on-site to find something that bears no resemblance to what they thought they booked. In documented cases, hotel staff themselves admitted the online images were deliberately fabricated. By that point, you’re already there and your money is already gone.

The attraction that doesn’t exist

AI hallucinates. That’s a technical term for something very concrete: the system invents facts that have no basis in reality, presenting them with the confidence of a guide who’s been there themselves.

In January 2026, an AI-generated blog from an Australian tour operator sent groups of tourists to hot springs in northern Tasmania. Those hot springs don’t exist, as CNN reported at the time. People showed up anyway, drawn in by the detailed description. In Malaysia, an elderly couple traveled 300 kilometers from Kuala Lumpur to a village in Perak to visit a cable car they’d seen in a video. The cable car was entirely AI-generated and has never existed. In Peru, a local guide had to warn two tourists who were about to head alone into a remote canyon based on an AI-generated travel plan. The canyon doesn’t exist.

These are not isolated incidents. Tourism research suggests that around 90 percent of AI-generated travel plans contain errors, ranging from closed museums to completely invented attractions and scam hotel websites.

The fake customer service line

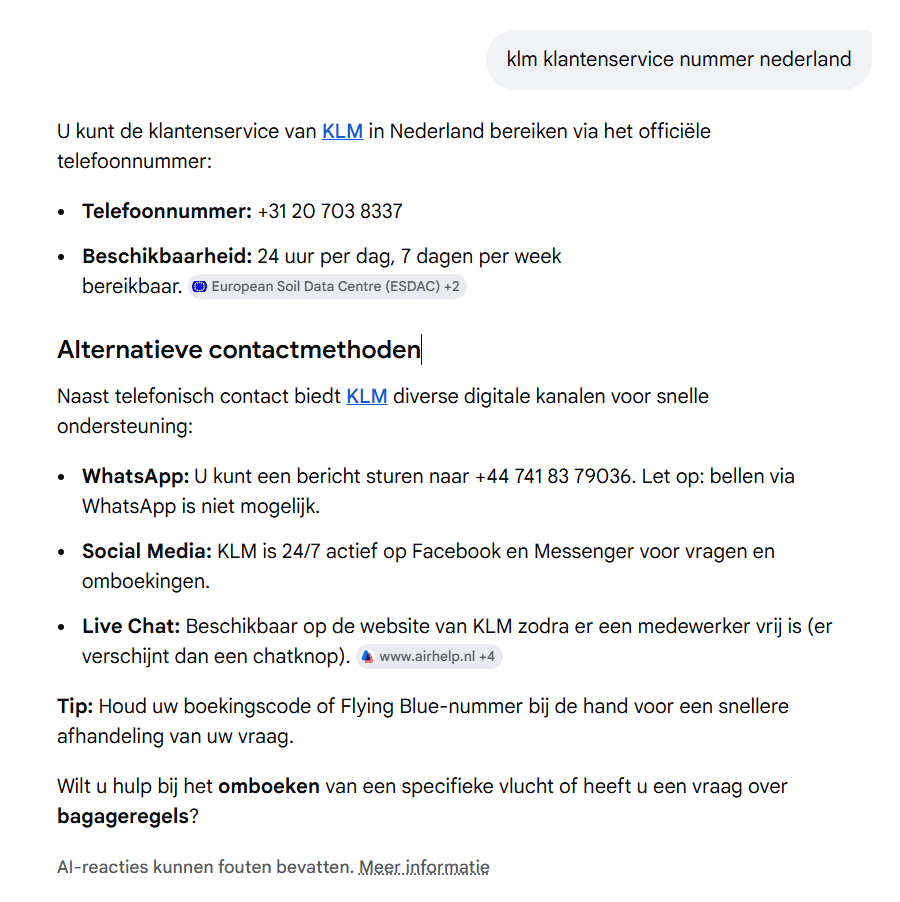

One of the fastest-growing scams right now involves fake phone numbers surfacing through AI search results. Someone searching for an airline’s customer service number can be shown a fraudulent number in an AI overview. We recently covered this in detail in our article on fake KLM numbers circulating via Google. The scam is particularly relevant right now, with thousands of travelers trying to reach airlines due to flight cancellations caused by the ongoing conflict in the Middle East.

But it goes beyond phone numbers. Scammers now use voice synthesis technology to mimic the voices of real airline employees. They call travelers, pose as customer service agents, and extract credit card details or booking references. The voice sounds genuine. The tone is professional. Many victims don’t realize it’s a scam until it’s too late.

Nobody is accountable and there’s nowhere to turn

When something goes wrong with an AI recommendation, there’s no one to hold responsible: no liable party, no support line that actually helps. Many fraudulent platforms deploy AI chatbots as fake customer service, deliberately preventing victims from ever reaching a real person. The bottom line is that nobody is responsible for your loss – except you.

What you can do instead

AI works well as a source of inspiration. As a booking tool, it’s unsuitable and in many cases dangerous. Stick to these basics:

- Always book directly through the official website of an airline or hotel – never through a link recommended by AI.

- Always verify any phone number you find via a search engine or AI overview against the official website.

- Cross-check attractions and restaurants using multiple independent sources: Google Maps, Tripadvisor, or travel bloggers who have actually been there.

- Pay by credit card where possible, so you can file a chargeback in case of fraud.

- Never trust an AI travel plan blindly. Use reliable travel guides or travel agents for the final planning.
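
For readers who like to automate things, the first rule can be turned into a quick check. The snippet below is only a minimal sketch in Python: the function name `is_official_link` and the `OFFICIAL_DOMAINS` list are our own illustrative assumptions, and the list has to be filled with domains you have verified yourself. It tests whether a link really points to an official domain (or a subdomain of it) over HTTPS, which catches lookalike addresses that merely contain a brand name.

```python
from urllib.parse import urlsplit

# Illustrative allowlist (an assumption): replace with domains you verified yourself,
# for example by typing the address from your ticket or booking confirmation.
OFFICIAL_DOMAINS = {"klm.com"}


def is_official_link(url, official_domains=OFFICIAL_DOMAINS):
    """Return True only if the URL uses HTTPS and its host is an
    official domain or a subdomain of one."""
    parts = urlsplit(url)
    # hostname is already lowercased by urlsplit; drop a trailing dot if present
    host = (parts.hostname or "").rstrip(".")
    if parts.scheme != "https" or not host:
        return False
    # Exact match, or a subdomain such as www.klm.com. The leading dot matters:
    # it keeps "notklm.com" and "klm.com.cheap-deals.example" from passing.
    return any(host == d or host.endswith("." + d) for d in official_domains)


if __name__ == "__main__":
    for link in (
        "https://www.klm.com/travel",
        "https://klm.com.cheap-deals.example/booking",
        "https://klm-support.example/contact",
    ):
        print(link, is_official_link(link))
```

A check like this only confirms where a link points. It says nothing about whether the allowlist itself is right, so the official domain still has to come from a source you trust, not from the AI answer you are trying to verify.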

AI knows a lot. It just doesn’t know what’s real.